CEOs are reporting no productivity gains. Pilots are dying after 90 days. The issue isn't the AI. It's what you're feeding it.

Thousands of CEOs are reporting no measurable improvement in productivity from AI investment. Analysts are beginning to resurrect a paradox from the 1980s: why does transformational technology so often fail to move the numbers?

In residential real estate, the answer is visible on the floor of every portfolio management office. Budgets are flowing into AI platforms, analytics dashboards, and smart leasing tools — while the actual data those tools depend on sits trapped in three to five disconnected systems, formatted for accountants and trusted by no one.

The problem is not artificial intelligence. The models have improved dramatically. The problem is the infrastructure beneath them — the disconnected, incomplete, and often untrustworthy data layer that was never designed to support the operational decisions institutions now need to make at pace.

This report makes the case that fixing AI in residential real estate requires fixing the data architecture first. And the only durable way to do that is a purpose-built rental operating system — one that standardises every workflow, creates a single resident-first master record, and delivers a unified source of operational truth to every stakeholder in real time.

"AI doesn't fail because the models are bad. It fails because the data underneath is fragmented, siloed, and stuck behind inaccessible APIs." — Remen Okoruwa, Co-Founder and CEO of Propexo (proptech data infrastructure for AI enablement)

There is a growing gap between the AI ambition operators announce and the operational reality they quietly live with.

In residential real estate, this gap has a specific character. Operators invest in AI tools. They commission pilot projects. The demos look compelling. But somewhere between the proof of concept and production deployment, something breaks. The outputs are wrong. The recommendations don't match what the team sees on the ground. The AI hallucinates confidence from incomplete data. After 90 days, the pilot quietly dies.

"Nobody wants to talk about this because it's not sexy. 'We need better data infrastructure' doesn't get standing ovations at conferences. But it's the reason proptech AI pilots quietly die after 90 days. The companies that figure out their data layer first are going to run circles around everyone else. Not because they have better AI — because they actually have something to feed it." — Remen Okoruwa

The broader data confirms this is not a property-specific failure. Research from Fullview and others puts the rate of AI projects that fail to advance beyond pilot or deliver expected outcomes at between 70 and 85 per cent. Fivetran's 2025 enterprise survey found that 42 per cent of AI initiative failures are attributable specifically to data readiness issues — not model selection, not change management, not budget. Data.

Fortune's reporting on CEO sentiment tells the same story from the boardroom. Despite enormous investment in AI capability, the productivity needle has not moved in the way that was promised. Economists are debating whether this resembles the productivity paradox of the 1980s, when computers proliferated without generating the efficiency gains that theory predicted, until business processes were eventually redesigned around the technology. The lesson from that era: the technology alone is never sufficient. The infrastructure supporting it must be rebuilt.

"There are no shortcuts. Everyone's funding AI pilots now, but you can't ignore the fundamentals of data connection and cleaning, process redesign, change management, and measuring outcomes. In the next year we'll see a wave of 'AI didn't work' articles. And those examples won't be because AI itself failed — we'll see a separation of companies doing real transformation from those doing AI theatre." — Paul Weston, GM and VP Product at HubSpot

Institutional portfolios generate vast amounts of operational data every day. The problem is not scarcity. It is architecture.

Every lead enquiry, viewing, application, tenancy start, rent collection, maintenance request, renewal decision, and void period generates data. The information exists. The question is where it lives and what condition it is in by the time it reaches the people who need to act on it.

The Knight Frank multifamily operational performance report captured the current state with unusual clarity. When portfolio managers and asset owners were asked how they access operational data, the results were striking.

- 44% stitch together data from multiple disconnected platforms.
- 25% cannot access it at all without a staff member or third-party operator.

For portfolios worth hundreds of millions of pounds, this represents a capital risk of the first order. Decision-makers are allocating resources based on data that is days, weeks, or months old — assembled by hand, reconciled across different platforms with different definitions, and delivered through intermediaries who introduce their own interpretation at every stage.

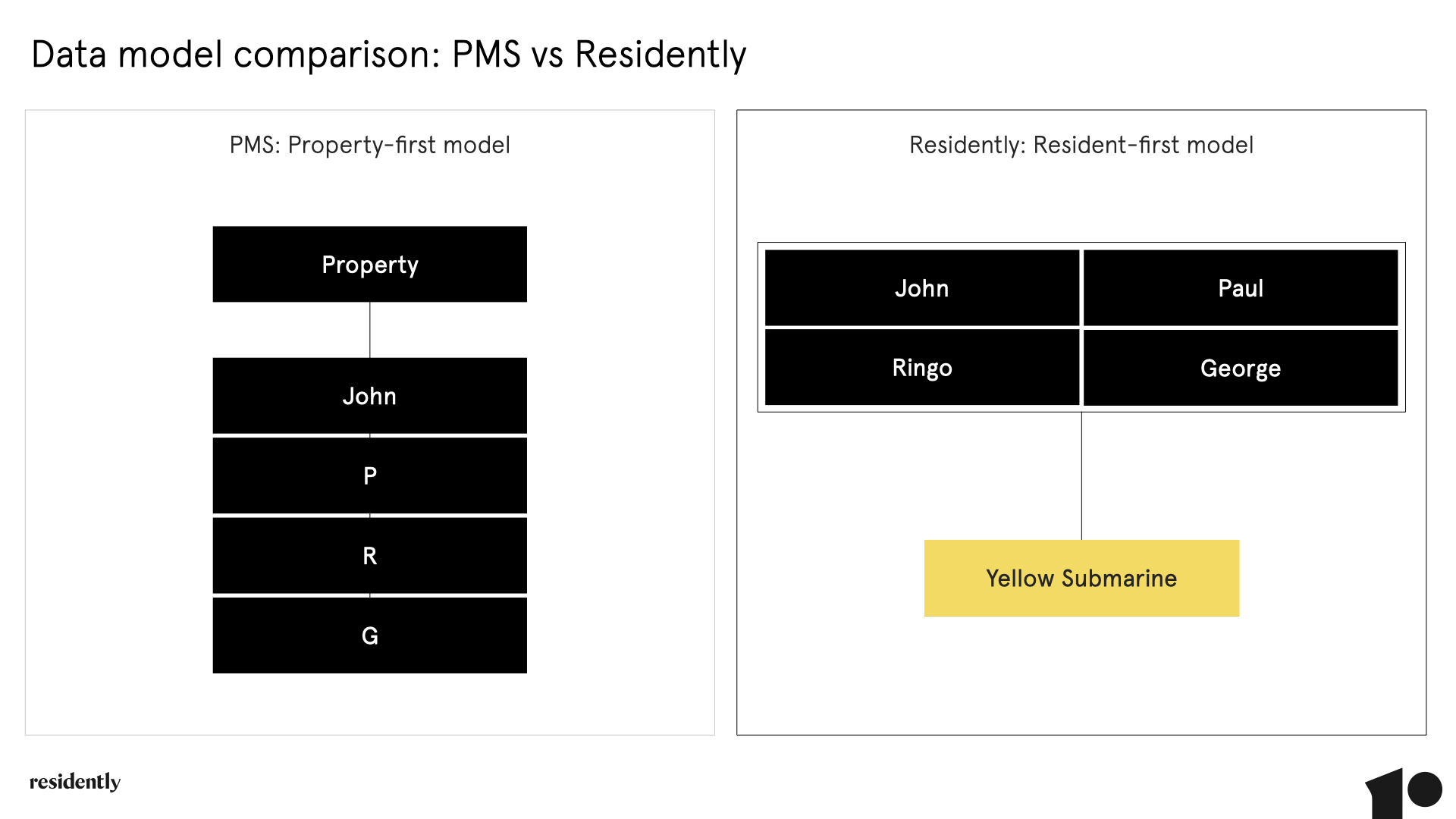

The property management system (PMS) is where most operational data ultimately resides, and the first place most operators look when they attempt to build AI capability on top of their existing infrastructure. The problem is fundamental: a PMS was built for accounting. It is designed around the property as the primary record. The resident, whose behaviour, satisfaction, and decisions drive nearly every financial outcome, exists in most PMS databases as a loose attachment to a property file.

The practical consequences are severe. First name fields entered as initials only. Multiple residents lumped into a single property record without individual profiles. Manual data entry that accumulates typos, duplications, and outdated contact information over years of operation. The data is there, technically — but it is incomplete, incorrectly formatted, and rarely structured in a way that supports operational decision-making, let alone AI modelling.

"Asset managers trying to extract meaningful performance metrics from a traditional PMS often find themselves working with numbers they can't fully trust. Legacy property management systems were built for accounting. They hold significant data, but it is frequently incomplete, incorrectly formatted, or simply not structured in a way that makes it usable for operational decision-making."

Feeding an AI model with this kind of data does not produce poor results — it produces confidently wrong results, which are worse. Personalisation fails because resident names and contact preferences are incorrect. Predictive models misfire because occupancy history is incomplete. Automated communications stumble because the system cannot distinguish between four residents sharing an address.

Across the residential real estate sector, operators have attempted to compensate for the limitations of their PMS by layering specialist tools on top of it — separate platforms for leasing, maintenance, resident communication, marketing analytics, and community engagement. Each tool addresses a genuine operational need. Collectively, they create an infrastructure problem that is, in many ways, worse than the PMS alone.

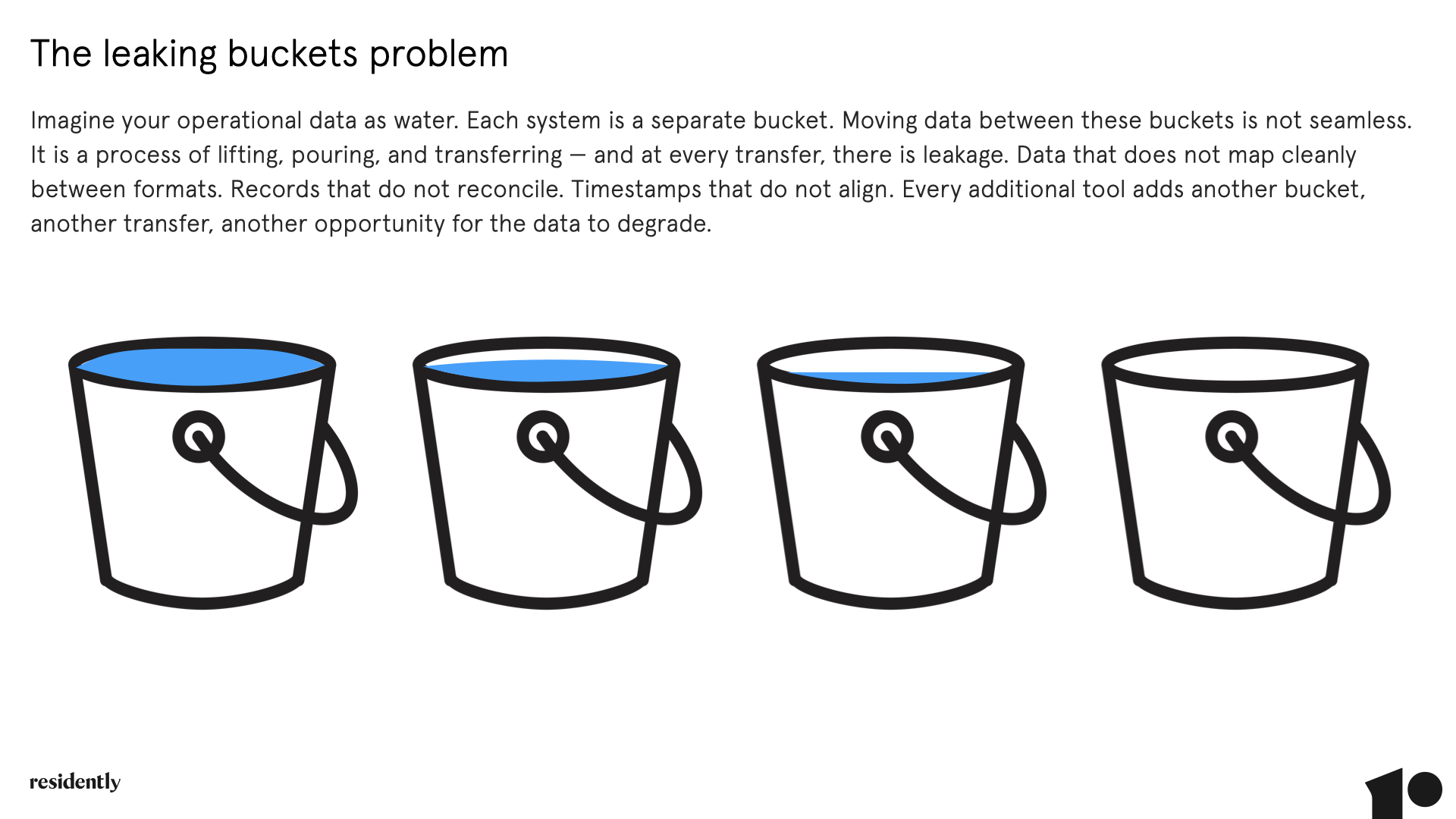

The analogy is direct: imagine your operational data as water. Each system — your PMS, your leasing tool, your maintenance platform, your communications suite — is a separate bucket. Moving data between these buckets is not seamless. It is a process of lifting, pouring, and transferring — and at every transfer, there is leakage. Data that does not map cleanly between formats. Records that do not reconcile. Timestamps that do not align. Every additional tool adds another bucket, another transfer, another opportunity for the data to degrade.

Each transfer loses data integrity. The more buckets, the more leakage.
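
To put rough numbers on the analogy, suppose (purely as an illustrative assumption, not a measured figure) that each hand-off between systems keeps a fixed share of records intact. Because the losses compound, the share that survives every hop falls geometrically as systems are added. A minimal Python sketch:

```python
def surviving_share(per_transfer_integrity: float, transfers: int) -> float:
    """Share of records still intact after `transfers` independent hand-offs,
    assuming each hand-off keeps `per_transfer_integrity` of records intact.
    The rate is a hypothetical input, not a benchmark."""
    if not 0.0 <= per_transfer_integrity <= 1.0:
        raise ValueError("integrity must be between 0 and 1")
    if transfers < 0:
        raise ValueError("transfers cannot be negative")
    return per_transfer_integrity ** transfers


for hops in (1, 3, 5):
    # With an assumed 98% integrity per hop, losses compound with each extra system.
    print(hops, round(surviving_share(0.98, hops), 3))
```

Under that assumption, five hand-offs leave roughly 90 per cent of records intact: a loss nobody chose, spread across systems nobody owns end to end.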

The deeper problem is that reconciling data across five or six platforms with different reporting logic is not analysis — it is archaeology. What "void rate" means in one system is not necessarily what it means in another. Benchmarking becomes guesswork. Strategic oversight becomes impossible. And any AI model built on top of this fragmented foundation is working with data that has been degraded at every step of its journey.
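
The definitional problem is easy to reproduce. The sketch below applies two plausible, hypothetical definitions of "void rate" to the same made-up data: a point-in-time share of vacant units, and void unit-days as a share of available unit-days. Neither is claimed to be how any particular platform calculates it.

```python
from datetime import date

# Hypothetical portfolio: each unit has a list of (start, end) void periods,
# with `end` exclusive. All values are illustrative.
VOIDS = {
    "unit-1": [(date(2025, 1, 1), date(2025, 1, 31))],  # 30 void days
    "unit-2": [],
    "unit-3": [(date(2025, 1, 25), date(2025, 2, 5))],
    "unit-4": [],
}


def snapshot_void_rate(voids, on: date) -> float:
    """Definition A (assumed): share of units vacant on a single date."""
    vacant = sum(
        any(start <= on < end for start, end in periods)
        for periods in voids.values()
    )
    return vacant / len(voids)


def period_void_rate(voids, start: date, end: date) -> float:
    """Definition B (assumed): void unit-days as a share of available unit-days."""
    total_days = (end - start).days * len(voids)
    void_days = 0
    for periods in voids.values():
        for v_start, v_end in periods:
            overlap = (min(v_end, end) - max(v_start, start)).days
            void_days += max(overlap, 0)
    return void_days / total_days


print(snapshot_void_rate(VOIDS, date(2025, 1, 31)))                  # definition A
print(period_void_rate(VOIDS, date(2025, 1, 1), date(2025, 2, 1)))  # definition B
```

On this data the first definition reports 25 per cent and the second roughly 30 per cent. Both are defensible; comparing one system's figure with the other's is not.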

Clean, organised data is vital. But there is a second condition for AI to work that is rarely discussed: teams must trust it.

Even when data is technically available, operational teams that have spent years compensating for inaccurate systems develop a healthy — and often well-founded — scepticism about the numbers in front of them. They know that the PMS sometimes has duplicate records. They know that the leasing platform's void rate does not match the asset manager's spreadsheet. They work around the systems rather than within them.

This creates a critical failure mode for AI adoption. If teams do not trust the underlying data, they will not trust AI-generated recommendations that depend on it — and they are right not to. You cannot automate a process that people have learned to double-check manually. The AI adds a layer of apparent sophistication while the real workflow continues on paper, in email, and in spreadsheets.

Rebuilding trust in operational data requires more than a technical solution. It requires standardising the workflows that generate the data in the first place. When the process that captures a lead enquiry is the same across every property, every geography, and every management team — when the same fields are completed in the same format every time — the data produced becomes inherently trustworthy. Not because someone cleaned it after the fact, but because the conditions for bad data were removed at source.
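
As a rough illustration of removing bad data at source, here is a minimal Python sketch of a lead-enquiry record that refuses to be created unless every mandatory field is present and well formed. The field names, allowed channels and validation rules are hypothetical. The point is that an initial-only first name is rejected at capture rather than repaired afterwards.

```python
import re
from dataclasses import dataclass

# Hypothetical specification for a lead enquiry: field names, the (deliberately
# loose) email pattern and the allowed channels are illustrative assumptions.
ALLOWED_CHANNELS = {"website", "portal", "phone", "walk-in"}
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


@dataclass(frozen=True)
class LeadEnquiry:
    first_name: str
    last_name: str
    email: str
    channel: str
    property_id: str

    def __post_init__(self):
        # Reject the record at capture time instead of cleaning it later.
        errors = []
        if len(self.first_name.strip().rstrip(".")) < 2:
            errors.append("first_name must be a full name, not an initial")
        if not self.last_name.strip():
            errors.append("last_name is required")
        if not EMAIL_PATTERN.match(self.email):
            errors.append("email is not a valid address")
        if self.channel not in ALLOWED_CHANNELS:
            errors.append(f"channel must be one of {sorted(ALLOWED_CHANNELS)}")
        if not self.property_id:
            errors.append("property_id is required")
        if errors:
            raise ValueError("; ".join(errors))


LeadEnquiry("Amara", "Okafor", "amara@example.com", "website", "prop-001")  # accepted
try:
    LeadEnquiry("A.", "Okafor", "amara@example", "email", "prop-001")
except ValueError as err:
    print(err)  # lists every rule the record broke
```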

The solution to the AI data problem in residential real estate is not a reporting layer. It is not a data warehouse. It is not a better dashboard bolted on top of a broken architecture. It is a rental operating system built on the right data model from the ground up.

A PMS is built around the property as the primary record. In practice, this means resident data lives inside a property record — often incomplete, frequently duplicated, and rarely structured with the granularity that modern operational intelligence requires. A PMS might say you have "one property with four residents," but in its database, those residents exist only as loose attachments to a property file.

Residently inverts this model. Our master record is the resident — complete with all personally identifiable data captured during onboarding, then tied to the property. The result is four fully profiled residents connected to one property: a structure that unlocks the complete, accurate, and actionable dataset that AI requires to perform.
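
The difference between the two data models can be sketched with a few Python dataclasses. The class and field names below are hypothetical and greatly simplified; they are not Residently's actual schema. In the property-centric shape, four residents collapse into one free-text field. In the resident-first shape, each resident is a record with its own identifier and contact details, linked to the property by ID.

```python
from dataclasses import dataclass

# Hypothetical, simplified models for illustration only.


@dataclass
class PropertyCentricRecord:
    """PMS-style: the property is the record; residents are loose text."""
    property_id: str
    address: str
    tenant_names: str  # one free-text field for everyone living there


@dataclass
class Resident:
    """Resident-first: each person is a master record in their own right."""
    resident_id: str
    first_name: str
    last_name: str
    email: str
    property_id: str  # a link to the property, not embedded inside it


@dataclass
class Property:
    property_id: str
    address: str


def residents_at(property_id, residents):
    """Return every fully profiled resident linked to a property."""
    return [r for r in residents if r.property_id == property_id]


flat = Property("prop-001", "12 Example Street")
residents = [
    Resident("res-1", "Amara", "Okafor", "amara@example.com", "prop-001"),
    Resident("res-2", "Ben", "Hughes", "ben@example.com", "prop-001"),
    Resident("res-3", "Chloe", "Park", "chloe@example.com", "prop-001"),
    Resident("res-4", "Dev", "Patel", "dev@example.com", "prop-001"),
]
legacy = PropertyCentricRecord("prop-001", "12 Example Street", "A. Okafor + 3")

print(legacy.tenant_names)                               # one opaque string
print(len(residents_at(flat.property_id, residents)))  # four addressable residents
```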

What Residently has effectively done is embed master data management (MDM) principles directly into the rental operating system. In MDM terms, the platform creates a "golden record" for each resident — cleansed, enriched, and synchronised across all workflows. Every interaction from marketing to maintenance enriches that master record. No duplicated or conflicting records. Data that is always complete, accurate, and up to date.
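
In MDM, a golden record is usually assembled with survivorship rules that decide which source wins for each attribute. The sketch below assumes the simplest possible rule (the most recent non-empty value wins) over three hypothetical source records that have already been matched to the same resident. MDM tools commonly support richer rules, such as source priority; this is an illustration, not Residently's implementation.

```python
from datetime import datetime

# Hypothetical source records for one resident, already matched to each other.
# Each record carries the time it was last updated so a survivorship rule can choose.
sources = [
    {"system": "pms", "updated": datetime(2023, 3, 1),
     "first_name": "J", "email": "jsmith@oldmail.example", "phone": ""},
    {"system": "leasing", "updated": datetime(2024, 6, 12),
     "first_name": "James", "email": "james.smith@example.com", "phone": ""},
    {"system": "maintenance", "updated": datetime(2025, 1, 20),
     "first_name": "", "email": "", "phone": "+44 7700 900123"},
]


def golden_record(records, attributes):
    """Build one record per resident using a simple survivorship rule:
    for each attribute, keep the most recently updated non-empty value."""
    golden = {}
    for attr in attributes:
        candidates = [r for r in records if r.get(attr)]
        if candidates:
            latest = max(candidates, key=lambda r: r["updated"])
            golden[attr] = latest[attr]
    return golden


print(golden_record(sources, ["first_name", "email", "phone"]))
```

No single source holds the complete picture here; the golden record does, and every downstream workflow reads from it rather than from the fragments.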

This is not merely tidy record-keeping. It is the foundation that AI requires to work. Entity resolution at onboarding ensures that no downstream AI model is polluted with bad data. Attribute completeness means each resident record captures both structured attributes (lease dates, preferences, service history) and unstructured behavioural data such as messages, sentiment, and maintenance requests, creating richer training sets for AI models. Bi-directional synchronisation with the PMS via an API layer ensures the master resident record remains authoritative without disrupting existing infrastructure.
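
One common way to keep a master record authoritative while still syncing in both directions is to assign an owning system to each field. The sketch below assumes that pattern; the field names and ownership choices are hypothetical, and the plain dictionaries stand in for whatever API calls a real integration would make.

```python
# Hypothetical field-ownership map: which system is authoritative for each field.
# The assignments are assumptions for illustration.
FIELD_OWNER = {
    "first_name": "master",
    "email": "master",
    "phone": "master",
    "rent_balance": "pms",  # ledger data stays owned by the accounting system
}


def sync(master: dict, pms: dict) -> tuple[dict, dict]:
    """Two-way sync: each field flows from its owning system to the other.
    Returns updated copies; the inputs are left unchanged."""
    new_master, new_pms = dict(master), dict(pms)
    for field_name, owner in FIELD_OWNER.items():
        if owner == "master" and field_name in master:
            new_pms[field_name] = master[field_name]
        elif owner == "pms" and field_name in pms:
            new_master[field_name] = pms[field_name]
    return new_master, new_pms


master = {"first_name": "James", "email": "james.smith@example.com", "phone": "+44 7700 900123"}
pms = {"first_name": "J", "email": "jsmith@oldmail.example", "rent_balance": 0.0}
master, pms = sync(master, pms)
print(pms["first_name"], master["rent_balance"])  # James 0.0
```

Explicit ownership avoids "last write wins" races: the PMS keeps its accounting role, while resident identity is corrected once, in the master record.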

The best part is that operators do not need to replace existing systems to get AI-ready. Residently is PMS, CRM, and data agnostic, integrating directly into existing stacks without disrupting daily operations. We have also built specialist data preparation tools to move from PMS data chaos to AI-ready clarity before the first resident record enters the system. Using techniques such as Levenshtein distance (the minimum number of single-character edits needed to turn one string into another), the platform identifies incomplete or inconsistent resident data and predicts the most likely correct values using email addresses, contracts, and any other available data sources.
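
Because Levenshtein distance counts the insertions, deletions and substitutions separating two strings, a small distance flags a probable typo. Here is a minimal Python sketch of how it might be combined with a second data source (here, the email address) to propose a corrected first name. The email-parsing heuristic and the distance threshold of 2 are assumptions for illustration, not a description of Residently's tooling.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions or
    substitutions needed to turn `a` into `b` (dynamic programming, two rows)."""
    if len(a) < len(b):
        a, b = b, a
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,               # deletion
                current[j - 1] + 1,            # insertion
                previous[j - 1] + (ca != cb),  # substitution (free if equal)
            ))
        previous = current
    return previous[-1]


def name_from_email(email: str) -> str:
    """Hypothetical heuristic: 'james.smith@example.com' -> 'james'."""
    return email.split("@", 1)[0].split(".")[0].lower()


def suggest_first_name(recorded: str, email: str, max_distance: int = 2):
    """Suggest a correction when the recorded first name is an initial or a
    near-miss of the name implied by the email address; otherwise None."""
    candidate = name_from_email(email)
    stored = recorded.strip().rstrip(".").lower()
    if not stored or not candidate:
        return None
    if len(stored) == 1 and candidate.startswith(stored):
        return candidate.capitalize()  # initial only, e.g. "J." -> "James"
    if stored != candidate and levenshtein(stored, candidate) <= max_distance:
        return candidate.capitalize()  # likely typo, e.g. "Jmaes" -> "James"
    return None


print(suggest_first_name("J.", "james.smith@example.com"))     # James
print(suggest_first_name("Jmaes", "james.smith@example.com"))  # James
```

A single heuristic like this is easily fooled (an address such as `jsmith@...` yields "Jsmith"), which is why the text above describes cross-checking against contracts and other sources before accepting a correction.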

There is a question worth asking any operator who has bolted an AI tool onto their existing marketing and leasing stack: what, exactly, are you automating? If the underlying process is undocumented, inconsistently followed, and varies by property manager or geography, AI does not improve it — it accelerates the inconsistency. Garbage in, garbage out is a cliché because it is true. But in property operations, the more precise version is: undefined process in, confidently automated chaos out. Automation without structure does not eliminate human error. It removes the human who might have noticed the error.

This is why documented workflows are not a nice-to-have adjacent to data quality — they are the same problem. A workflow is the specification for how data gets created. When a maintenance request is logged, what fields are mandatory? When a renewal conversation is triggered, at what point in the tenancy lifecycle, and by which signal? When a lead is qualified, what criteria must be met before it progresses? If the answer to any of these questions is "it depends on who's doing it," no AI tool can reliably act on the output.
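
Answering "at what point, and by which signal?" means writing the rule down precisely enough that a system can execute it. A minimal Python sketch, assuming a hypothetical policy of opening renewal conversations within 90 days of lease end:

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical spec: the 90-day window and the signal used are assumptions
# chosen for illustration, not a recommended policy.
RENEWAL_WINDOW_DAYS = 90


@dataclass(frozen=True)
class Tenancy:
    tenancy_id: str
    lease_end: date
    renewal_started: bool = False


def should_trigger_renewal(tenancy: Tenancy, today: date) -> bool:
    """The rule is written down once, so it behaves the same for every
    property manager: open the renewal conversation when the lease ends
    within the window and no renewal is already in progress."""
    days_left = (tenancy.lease_end - today).days
    return 0 <= days_left <= RENEWAL_WINDOW_DAYS and not tenancy.renewal_started


today = date(2025, 3, 1)
print(should_trigger_renewal(Tenancy("t-1", date(2025, 5, 15)), today))  # True
print(should_trigger_renewal(Tenancy("t-2", date(2025, 9, 1)), today))   # False
```

Whatever the right window is for a given portfolio, once it lives in a rule rather than in individual habits, "it depends on who's doing it" stops being the answer.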

"Clean, organized data is absolutely vital. So are workflows. Highly documented workflows. Whether for AI use or not, conferences need to start teaching 'the art of the spec' to our industry." — Grady Newman, founder of Resi (digital marketing software for multifamily real estate)

The spec — the precise, agreed definition of how a process runs — is what transforms a sequence of human actions into something a system can learn from, replicate, and eventually automate with confidence.

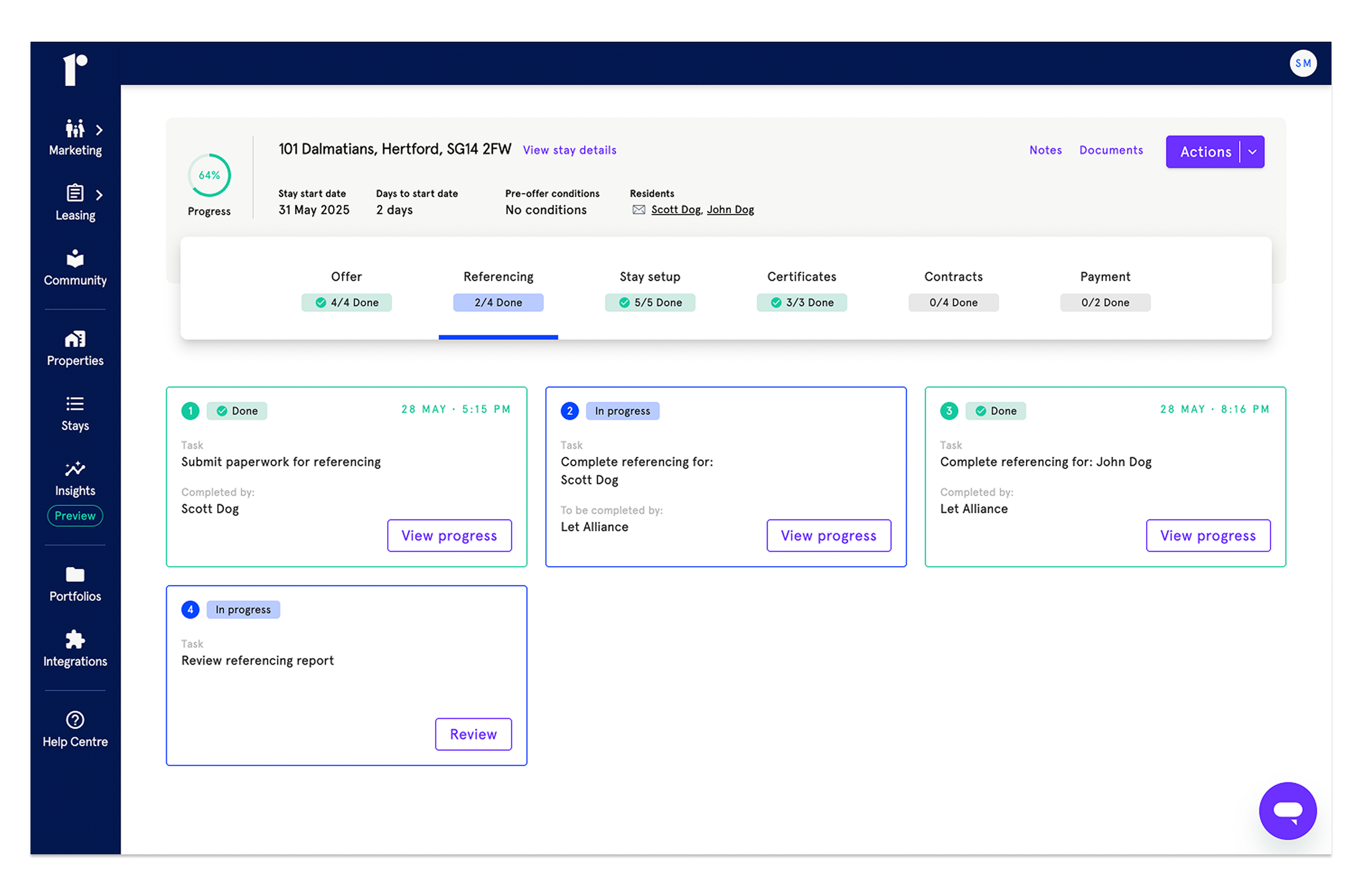

Residently's rental operating system embeds this discipline into the product itself. Every workflow — from lead enquiry to referencing, contract generation to renewal — is structured, rules-based, and consistent across every property, every team, and every geography. The system does not allow shortcuts that produce incomplete records. It does not permit the process to be followed differently in Manchester than in Birmingham. This is not rigidity for its own sake. It is the engineering of trustworthy data at source — the only approach that gives AI models the reliable, structured corpus they need to move from pilot to production. When Residently clients use AI-powered automation, they are not hoping the underlying data is clean enough. They know it is, because the workflows that produced it left no room for it to be anything else.
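
A common way to make a workflow impossible to short-cut is to model it as a state machine with an explicit list of allowed transitions. The stage names and transitions below are hypothetical, loosely following the journey described in this section; this is a sketch of the pattern, not Residently's product logic.

```python
# Hypothetical tenancy workflow. Stage names follow the journey described above
# (enquiry, referencing, contract, tenancy, renewal); the transitions are assumed.
ALLOWED_TRANSITIONS = {
    "enquiry": {"referencing", "withdrawn"},
    "referencing": {"contract", "withdrawn"},
    "contract": {"tenancy", "withdrawn"},
    "tenancy": {"renewal", "ended"},
    "renewal": {"tenancy", "ended"},
    "withdrawn": set(),
    "ended": set(),
}


class WorkflowError(Exception):
    """Raised when a step would break the agreed process."""


def advance(current: str, target: str) -> str:
    """Move to the next stage only along an allowed transition.
    Skipping referencing, for example, is rejected rather than logged later."""
    if current not in ALLOWED_TRANSITIONS:
        raise WorkflowError(f"unknown stage: {current}")
    if target not in ALLOWED_TRANSITIONS[current]:
        raise WorkflowError(f"cannot move from {current} to {target}")
    return target


stage = "enquiry"
for step in ("referencing", "contract", "tenancy"):
    stage = advance(stage, step)
print(stage)  # tenancy

try:
    advance("enquiry", "contract")  # attempt to skip referencing
except WorkflowError as err:
    print(err)  # cannot move from enquiry to contract
```

The same transition table applies in every city, which is what makes the resulting records comparable across the portfolio.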

Two AI applications are reshaping residential real estate operations right now. Both depend entirely on the quality of the data layer beneath them.

Real estate runs on documentation — leases, contracts, applications, compliance records. These documents typically live in silos, are inconsistent in format, and require hours of manual review. AI-powered intelligent document processing converts unstructured files into structured, queryable data. Combined with retrieval-augmented generation (RAG), these systems can understand complex relationships across thousands of documents, answering questions that would previously have required days of manual analysis:

- Which leases are due to expire within 90 days?
- How do termination clauses differ across the portfolio?
- What is the financial exposure if utility caps are exceeded?
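
Once lease terms have been extracted into a consistent structure, the first of these questions stops being a document review and becomes a simple query. The sketch below assumes a hypothetical `Lease` record holding the extracted end date. It shows the structured-query side only, not the document-processing or RAG steps that would populate it.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical structured lease records, as they might look after intelligent
# document processing has extracted key terms from the source documents.


@dataclass(frozen=True)
class Lease:
    lease_id: str
    property_id: str
    end_date: date


def expiring_within(leases, today: date, days: int = 90):
    """Leases whose end date falls between today and today + `days`, soonest first."""
    cutoff = today + timedelta(days=days)
    due = [lease for lease in leases if today <= lease.end_date <= cutoff]
    return sorted(due, key=lambda lease: lease.end_date)


leases = [
    Lease("L-001", "prop-001", date(2025, 4, 30)),
    Lease("L-002", "prop-002", date(2025, 12, 31)),
    Lease("L-003", "prop-003", date(2025, 3, 15)),
]
print([lease.lease_id for lease in expiring_within(leases, date(2025, 3, 1))])
# ['L-003', 'L-001']
```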

In mid-market acquisitions, where documents are often incomplete or varied, these systems uncover risks and inconsistencies that traditional reviews miss — enabling faster due diligence and more accurate portfolio valuation. But this only works when the documents are held in a consistent format within a unified system. If leases live across five different platforms, each with different metadata structures, the AI has no coherent corpus to work from.

Resident expectations are changing. AI-powered communications tools can handle routine enquiries, manage workflows, and surface urgent issues in real time. But the use case that has the most direct financial impact is renewal prediction.

When a resident's communication history, maintenance request frequency, satisfaction signals, and lease timeline all exist within a single connected system, it becomes possible to identify churn risk months before notice is served. Automated renewal campaigns can be triggered by resident sentiment. Maintenance scheduling can be prioritised according to its impact on renewal probability. Void risk can be modelled at a portfolio level rather than managed reactively property by property.

None of this is achievable when the sentiment data is in one system, the maintenance history is in another, and the lease timeline is in a third. The AI model has no way to perform the cross-domain reasoning that makes churn prediction meaningful.
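
The cross-domain point is easiest to see in code. The following Python sketch uses a hand-weighted score, not a trained model, over hypothetical extracts from the lease, maintenance and communications domains; every name, threshold and weight is an assumption. What matters is the shared resident ID: if each domain lived in a different system with its own identifiers, every lookup below would first need a reliable cross-system match.

```python
from datetime import date

# Hypothetical extracts from three domains, all keyed on the same resident ID.
# Field names, thresholds and weights are illustrative assumptions only.
lease_ends = {"res-1": date(2025, 6, 30), "res-2": date(2025, 6, 30)}
maintenance_requests = {"res-1": 6, "res-2": 1}  # requests in the last six months
sentiment = {"res-1": -0.4, "res-2": 0.3}        # -1 (negative) to 1 (positive)


def churn_risk(resident_id: str, today: date) -> float:
    """Naive additive score combining lease timing, maintenance load and
    sentiment. It only works because every input shares the same key."""
    days_left = (lease_ends[resident_id] - today).days
    score = 0.0
    if days_left <= 120:
        score += 0.3
    if maintenance_requests.get(resident_id, 0) >= 4:
        score += 0.3
    if sentiment.get(resident_id, 0.0) < 0:
        score += 0.4
    return score


today = date(2025, 3, 15)
ranked = sorted(lease_ends, key=lambda rid: churn_risk(rid, today), reverse=True)
print([(rid, round(churn_risk(rid, today), 1)) for rid in ranked])
```

Both residents have identical lease timelines; only the maintenance and sentiment signals separate them, and those signals are exactly what a lease-only view cannot see.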

"McKinsey's latest report on AI in real estate puts into words something many of us already sense: the biggest gains won't come from adding more technology. They'll come from fundamentally rethinking how we operate. The real opportunity lies in connectivity. When data from buildings, tenant platforms and operational systems is properly integrated, the day-to-day processes that eat up time across multiple people and departments can be dramatically streamlined." — Paul A, Real Estate CEO

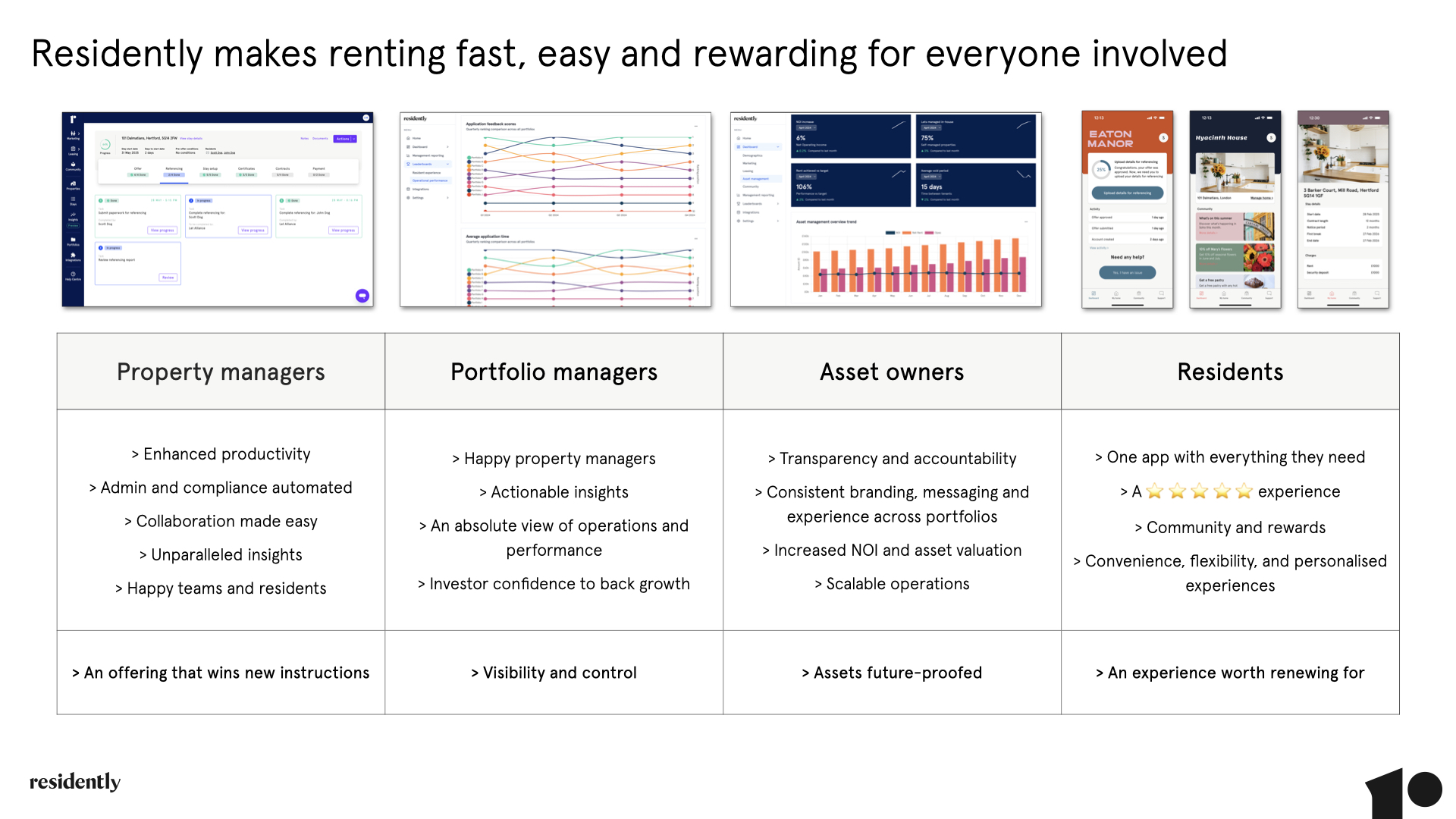

The most compelling argument for a rental operating system is not a technical one. It is that every stakeholder in the residential property chain — from resident to investor — has a clear, immediate reason to want it.

One of the most insightful observations in the AI data debate is about how to build the business case for foundational investment. The answer is not to argue for data infrastructure in the abstract. It is to show how every role across the organisation derives immediate, concrete value from solving it — so that the initial investment becomes an obvious decision rather than a budget negotiation.

"The most important approach is to prepare a thoughtful ROI analysis that highlights a broad range of use cases that deliver immediate value to a variety of stakeholders or departments across the company. The more you can show how multiple aspects of the business will benefit, the more the initial undertaking becomes a no-brainer." — Joe Stockton, Co-Founder and CEO of Oyster Data

A rental operating system is uniquely positioned to make this argument. Unlike a standalone analytics platform or a point solution, it supports every role in the journey — creating a cascade of value that compounds with adoption.

Becoming AI-ready in residential real estate is not a technology project. It is an operational transformation — one that starts with data architecture and compounds through every workflow in the business.

The sector is under pressure to modernise. AI holds genuine promise to deliver operational efficiency, resident satisfaction, and materially higher NOI. But realising that promise requires looking beyond the models and investing in the infrastructure that supports them.

The 70 to 85 per cent of AI projects that fail are rarely let down by the technology. They are let down by data architecture that was never designed for the demands being placed on it. Legacy PMS systems built for accounting. Point solutions that fragment operational intelligence across disconnected platforms. Teams who cannot trust the numbers in front of them.

A rental operating system built on resident-first master data management, standardised workflows, and a unified platform that spans the entire tenancy lifecycle is not a technical luxury. It is the business necessity that makes everything else possible. Without it, AI is another expensive pilot that dies after 90 days. With it, AI becomes the foundation of a new era in rental operations.

Our rental operating system, combined with Insights+, gives asset managers, portfolio managers, and investors a single source of truth across marketing, leasing, and resident engagement — in real time.

Ready to build the foundation? Talk to the Residently team.